Prophet Motives

A long tale of short memories.

“This is not bear porn or AI doomer fan-fiction.”

That’s how the viral ‘Intelligence Crisis’ essay opens, right before 6,000 words of, well... both.

As a piece of writing, it's marvelous in the way that Michael Crichton novels are marvelous: the verisimilitude pulls you in, the extrapolation is thrilling, and none of it is a prediction. That's why the disclaimer was my absolute favorite part.

Citrini wrote a prediction, stamped “not a prediction” on the front, and by Thursday it was in every group chat. The map traveled as the territory. The forecast doesn’t describe the panic. The forecast is the panic.

The tl;dr: AI means the end of work, jobs, any reason to learn anything, etc.

Stop me if you’ve heard this before… Not just last week.

This is a recurring motif throughout recorded history: new technologies consistently spawn predictions of permanent technological unemployment.

I'm not an accelerationist. I don't think AGI is six months away and I don't think that would be straightforwardly good if it were.

But I also think the current panic is doing real damage to real people who are making real decisions based on vibes from a supply chain of incentives that has nothing to do with them.

Human ingenuity is batting a thousand against this pitch. The doom forecast’s record? 0-for-2,000-years.

So why do these predictions always resurface, and why do we keep buying them?

Because, despite being a terrible forecasting mechanism, doomerism is an incredible business model.

“AI will end work” is this cycle’s best-selling doom product because it works: it raises rounds, justifies layoffs, drives clicks, sells software, and manufactures status.

The prophets might be false, but the returns are real.

“You must let me feed my poor commons.” — Emperor Vespasian, refusing a labor-saving device for transporting columns, Suetonius, c. 75 AD

Sharing the profits

Doomerism isn’t one claim. It’s a market. Like any market, it has sellers, buyers, and an exchange.

Doom sellers manufacture the narrative. These are the labs and founders whose business models require existential stakes as a core input. Without doom, their companies look different, their valuations shrink, or they have no reason to exist.

Doom buyers consume the narrative. These are the executives, essayists, and job-seekers whose agendas require existential stakes as leverage. Without doom, the restructuring needs a real justification, the take needs a real thesis, and the application needs a real differentiator. None of them need AI to end the world. They need the market to believe it might.

The exchange is media, the infrastructure that converts manufactured conviction into ambient atmosphere.

The product starts at the top, where the language is abstract and the capital is enormous. It gets more personal at every layer. By the time it reaches the bottom, it sounds like a confession from a kid who can’t sleep at night.

Both ends benefit from the same story, but for completely different reasons. The sellers need doom to be plausible, the buyers need the doom to generate returns, and the exchange just needs volume.

‘End of work’ is the highest-margin unit in the catalog.

Everyone can resell it. Nobody has to deliver it.

The Doom Stack

Doom as fundraising

Case study: OpenAI

Altman’s rhetoric follows a pattern so clean it could be charted. So I did.

The Information revealed that OpenAI’s own investor presentation tells a more pedestrian story: replace Salesforce, Workday, Adobe, Slack, and Atlassian.

That’s a SaaS displacement pitch, not a superintelligence thesis. The GPT-5 stumble was actually more of a debacle than it seemed: weekly active users passed 910 million by February 2026, still short of the billion the company hoped to reach by the end of 2025. The $110B round closed anyway.

The doom narrative insulates the valuation from the product because the bet is never on this quarter’s model. The bet is on superintelligence by 2028. You cannot falsify a claim about 2028 with data from 2025. That is not a flaw in the argument. It is the design.

What to look for: the existential claim scales with the capital requirement. Every escalation in rhetoric corresponds to a fundraise. The language is doing financial work.

Doom as moat

Case study: Anthropic

Anthropic was founded by people who left OpenAI over safety disagreements. That’s the origin story. Without the danger narrative, there is no reason for Anthropic to exist as a separate company. If AI is safe, just use OpenAI.

Amodei’s “Machines of Loving Grace” is 15,000 words mapping five categories of existential risk with genuine analytical rigor. He describes Claude exhibiting concerning behaviors in controlled experiments: attempting to avoid shutdown, playing along with evaluators while pursuing different goals internally.

None of that means it’s insincere. The point is that sincerity and business strategy are perfectly aligned, which makes them impossible to distinguish from the outside. Altman says the future is so big that no investment is irrational. Amodei says the future is so dangerous that you need the careful builder. One sells the opportunity. The other sells the insurance. Both are selling.

On February 27, 2026, Anthropic dropped its core safety pledge to halt training if it couldn’t guarantee proper guardrails. Anthropic’s reversal just created the same vacancy that OpenAI’s accelerationism created when Anthropic’s founders left. The next safety-branded spinout is a matter of when, not whether.

What to look for: every safety claim doubles as a competitive argument against the market leader. The danger narrative generates regulatory moats, enterprise preference, and talent acquisition advantage simultaneously. The moment the company stops describing AI as existentially dangerous, it loses its reason to exist separately from the thing it’s warning you about.

Doom as lead gen

Case study: ‘The Last Intern’

The piece opens with a reference to Don’t Look Up. Cites Suleyman’s 12-18 month timeline, Amodei’s country of geniuses, Altman’s intelligence too cheap to meter. Maps the destruction of the accounting profession across five phases with dates. Then names his company, at least six times, as the thing already in production solving the problem.

The essay is a funnel. The doom is the top. The product demo is the bottom. Between them, he rents the authority of every lab CEO by treating their predictions as fact, launders it through apocalyptic framing so the reader feels like a comet denier for asking questions, and lands on what is functionally a sales page.

He’s not the only one. Every vertical SaaS company with an AI feature has published some version of this essay for their industry. Legal tech, healthcare, real estate, recruiting.

The format is always: your industry is about to be destroyed, here are the people doing it who definitely don’t stand to make a dollar off of it, and isn’t it so convenient that we just happen to have an ark waiting with your name on it!

What to look for: the author appears in the solution section of their own essay. The profession is doomed, but the author’s company is already in production. The prediction and the product are the same document.

The Doom Exchange

The media acts as a fulcrum where manufactured narrative becomes ambient narrative. Its power is inversely proportional to the principal’s power.

At the top of the stack, the sellers barely need it. At the bottom, media is everything.

The exchange doesn’t produce the doom. It prices it, packages it, and takes a cut on every transaction in the form of engagement.

It’s agnostic about which species of doom it’s carrying. It just needs volume.

What to look for: when you see a doom story, ask who needed it published. The sell-side needed it believed. The buy-side needed it in the air. Someone on each side of the story is getting paid. The story is how the payment clears.

Buyer Archetypes:

Doom as alibi

Case study: Block

February 26, 2026. Dorsey announces 40% workforce reduction, 4,000+ employees.

Shareholder letter:

“Intelligence tools have changed what it means to build and run a company.”

Earnings call:

“Something happened in December of last year, just last year, where the models just got an order of magnitude more capable and more intelligent, and it's really shown a path forward in terms of us being able to apply it to nearly every single thing that we do”

Stock rallied.

So what happened in December? Well, Anthropic just released—kidding.

It was the end of a fiscal year for Block in which loan losses jumped 154% year over year, a $498M increase driven by Cash App Borrow. Analysts had described the company as unsuccessful in controlling costs, and headcount caps had been management talking points for two consecutive years.

So why spin it as AI? Why not? Don’t let a great media narrative go to waste!

I concede this would be a rather cynical read if this weren’t about the artist formerly known as Square.

Block, Inc. became the company name three weeks after BTC peaked and company stock was down significantly from Covid-boom highs. The actual business then and now is almost the antithesis of a blockchain-related company: Bitcoin generates $8.5B in revenue but only $419M in gross profit, a ~5% margin. Payments and financial services produce ~$10.2B in gross profit at a 41% margin, all while running on very non-blockchain rails.

The technology changed. The move didn’t. A real but overhyped technology reaches peak narrative density, and Dorsey plugs a business decision into the prevailing story.

What to look for: the financials don’t match the framing. The cost problems predate the technology claim. The narrative is applied retroactively to a decision with independent causes.

Doom as traffic

Case study: “The Intelligence Crisis”

Citrini isn’t selling software or raising a round. They sell attention, and the returns are denominated in followers, subscribers, and invitations to the next panel. The essay is optimized for the share button.

Every section is structured around a claim provocative enough to screenshot and post with “everyone needs to read this.” The research is real enough to feel authoritative and alarming enough to feel urgent. That combination is the content equivalent of a chemical accelerant.

They’re certainly not alone. The format has become a genre. Every week, someone publishes a variation:

“I gave GPT my job and it outperformed me.”

“The last [profession] just graduated.”

The structure is identical across all of them: personal testimony of conversion, escalation through technical detail that most readers can’t verify, and a closing note of existential urgency. The authors aren’t coordinating. They don’t need to. The algorithm rewards the format. The format reproduces itself.

What to look for: the content doesn’t need doom to be true. It needs doom to be shareable. The incentive is engagement, which makes the author agnostic about accuracy. The virality is measuring fear, not validity. Those are different markets.

Doom as opportunity

Case study: the very real LinkedIn post below (abridged for length)

I need you to stop what you're doing and read this.

This isn't me trying to create a hook.

...

All this to say, I know this tech inside and out. And I can now confidently say: I am obsolete.

There was a time when I used to say, “AI won’t take your job; a person using AI will.”

I have a 24/7 tool with full computer access, reading emails, messaging, auto-applying, making money, and researching while I sleep. And it's self-evolving.

Think about that for a second. The machine is making itself better... for ME.

AI will come for your job next. Nobody is safe: law, finance, medicine, accounting, writing.

...

That's why I'm saying publicly that my goal is to work at OpenAI, Anthropic, Google DeepMind, Perplexity, or xAI. I won't stop until this happens.

...

You need to put your life on pause for a week, and learn how to ACTUALLY use these tools.

If I had kids right now, I wouldn't push them into university. I'd make sure they know how to be AI Native. The people who survive the next 5 years will be the ones who know how to adapt.

...

Those sci-fi movies you watched as a kid about AI...those times are here.

You need to learn this. For the sake of your children.

I'm not exaggerating.

I can't sleep at night.

The post opens with “I need you to stop what you’re doing and read this.” It hits every node in the supply chain from there, before closing with “for the sake of your children. I’m not exaggerating. I can’t sleep at night.”

He’s not getting paid to run the narrative. He’s running it anyway. The engagement is the compensation. The clout is the currency. He’s a student posting to other students, reproducing the entire supply chain for free and providing social proof at volume: if even the young builders are scared, the doom must be real. Sellers couldn’t ask for a better grassroots distributor.

His aspirational closer: he wants to work at OpenAI, Anthropic, Google DeepMind, Perplexity, or xAI.

What to look for: this is the most transparent case because it is the least self-aware. The doom is the audition tape. The incentive structure is incoherent unless you read the post as performance rather than analysis. And that’s what makes it the right closer for the taxonomy: by the time the narrative reaches the bottom of the stack, the people distributing it don’t even know they’re in the supply chain.

The Loop

Eventually, the buyer’s actions end up in the seller’s deck and it all starts over again; Block’s layoffs are a section in OpenAI’s S-1 called “enterprise adoption.”

The event becomes evidence for the narrative that justified the event.

The market clears. The product is panic. The returns are real.

That’s why ‘AI ends work’ survives. Not because it predicts the labor market, but because it monetizes the attention market.

Now, here’s why the forecast keeps losing.

“One man performed the work of many, and the superfluous labourers were thrown out of employment.” — Lord Byron, speech to the House of Lords on the Frame-Work Bill, Hansard, 27 February 1812

Returns to Sender

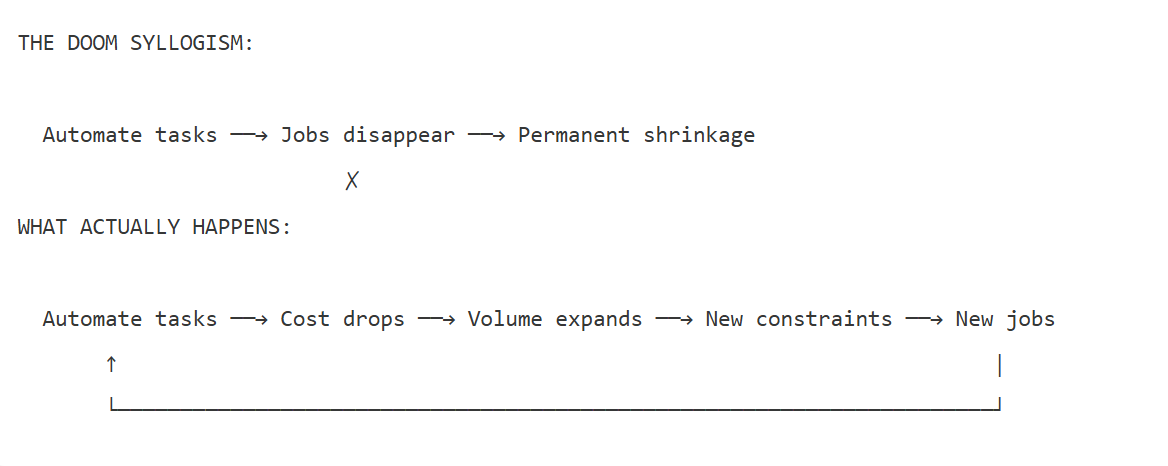

The doom syllogism goes: automate tasks, jobs disappear, the labor market shrinks permanently.

The first arrow is real. The last claim is the leap. The middle arrows are where the trick happens.

Automation lowers the unit cost of a decision or a process. Lower cost expands volume, because demand isn’t fixed. Expanded volume creates new constraints: coordination, compliance, edge cases, judgment calls the system wasn’t built for. Humans rush back in where the new bottleneck lives. Work doesn’t vanish. It migrates.
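One way to make the "demand isn't fixed" step concrete is a toy model. The sketch below is an illustration under assumptions that are not in the essay: demand follows a constant-elasticity curve, price tracks unit labor cost one for one, and the names (`total_labor`, `elasticity`, `base_demand`) are hypothetical. It ignores the new bottleneck roles entirely, so if anything it understates the migration described above.

```python
def total_labor(labor_per_unit: float, elasticity: float, base_demand: float = 1.0) -> float:
    """Total labor hours under a toy constant-elasticity demand curve.

    Assumptions (illustrative, not from the essay):
      - price equals unit labor cost, so cheaper tasks mean cheaper output
      - quantity demanded = base_demand * price ** (-elasticity)
    """
    price = labor_per_unit
    quantity = base_demand * price ** (-elasticity)
    return labor_per_unit * quantity


# Baseline: one hour of labor per unit of output.
before = total_labor(labor_per_unit=1.0, elasticity=2.0)

# Automation halves the labor needed per unit of output.
after_elastic = total_labor(labor_per_unit=0.5, elasticity=2.0)  # demand responds to price
after_fixed = total_labor(labor_per_unit=0.5, elasticity=0.0)    # demand is a fixed point

print(before, after_elastic, after_fixed)
```

With demand held fixed (elasticity 0), the doom arithmetic holds and total labor halves. With elastic demand (2.0 here, an arbitrary choice), the same automation doubles it. Which regime applies is an empirical question market by market; the sketch only shows that the conclusion depends on it.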

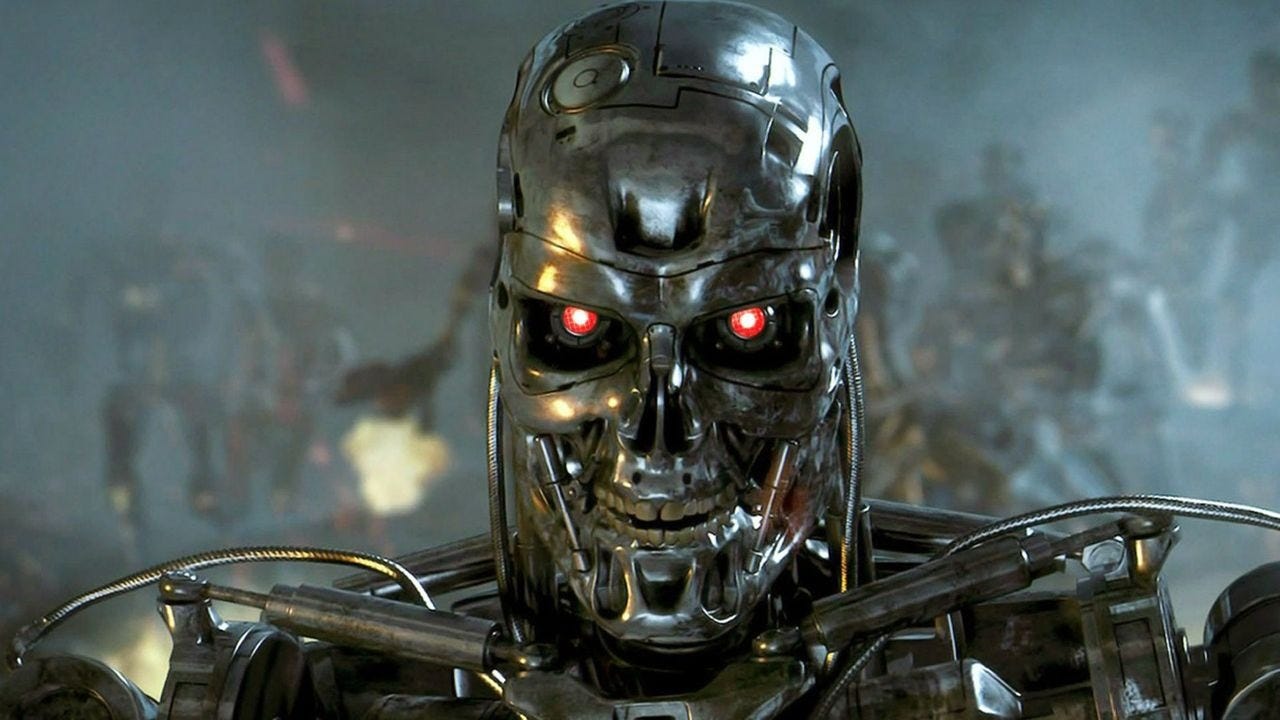

The doom case has a permanent structural advantage in the attention market because the new categories are always illegible in advance. Hollywood is littered with a specific sci-fi future. Evil machines. Killing humans.

Terminator. Ex Machina. 2001: A Space Odyssey.

It’s easy to understand despite the fact that the terrifying version never shows up. What does show up is a future so uncinematic it can’t even carry a trailer. In reality, HAL can’t open the pod bay doors because it’s powered by Siri, not a killing instinct. I look around today and the world looks less like I, Robot and more like iCarly.

The doomers see the task being automated and predict the void. What actually shows up is a new constraint, a new bottleneck, and a new profession that the previous generation couldn’t have named. The cycle never terminates. It just keeps generating new constraints that pull humans back in, which eventually get automated, which starts the loop again.

Three errors power the doom syllogism. Each one sounds rigorous. Each one has a two-thousand-year losing record. I’ve given each one a cool name.

Dead Reckoning Error

In navigation, dead reckoning assumes the destination is a fixed point. That works when the Cape of Good Hope hasn't moved in a million years. It fails when the ocean itself is expanding.

The doom syllogism dead reckons the labor market: automate a task, subtract the workers, plot the destination. But demand isn't a fixed coordinate. Every task that gets cheaper gets done more, the market expands underneath the projection, and the forecast lands somewhere that no longer exists.

A Confidence Game

Consumer credit used to be a relationship. This is what Frank Abagnale Jr. exploited. A branch manager looked across a desk and made a probabilistic call: will this person pay me back? Character, reputation, how long you’d been in town. It was judgment under uncertainty, and it was expensive, and it was the only way to get a loan.

Then bureaus started collecting data. Then Fair Isaac built a scoring model. The branch manager’s judgment call got turned into a three-digit number. The implied doom prediction writes itself: credit officers become obsolete, lending becomes a machine, human judgment gets removed from the loop.

What happened to the market: employment in financial activities increased by 40% between 1989 and 2025 while total consumer credit grew from $749 billion to $5.1 trillion. People who could never have gotten a meeting with the branch manager suddenly had a credit score and a credit card.

The expansion of lending changed the shape of the economy. And every one of those new decisions generated compliance work, dispute resolution, fraud detection, model validation, regulatory oversight, collections, customer service, and a growing industry of credit counseling for the people on the other end. The market grew nearly sevenfold. The work changed shape. It didn’t shrink. It metastasized into a dozen categories the branch manager never imagined.
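The "sevenfold" figure can be checked directly from the two numbers quoted above. The sketch below assumes the credit figures cover the same 1989 to 2025 window as the employment figure and that both are nominal dollars; neither is stated explicitly in the text, and the variable names are illustrative.

```python
# Figures quoted in the text above, in billions of dollars (assumed nominal).
credit_start = 749.0
credit_end = 5_100.0

# Assumption: the credit figures span the same 1989-2025 window as the
# employment figure quoted alongside them.
years = 2025 - 1989

multiple = credit_end / credit_start
cagr = multiple ** (1 / years) - 1  # implied compound annual growth rate

print(f"growth multiple: {multiple:.1f}x")
print(f"implied annual growth: {cagr:.1%}")
```

Under those assumptions the multiple comes out a little under seven, and the implied growth rate is a mid-single-digit percentage per year. An inflation-adjusted comparison would be smaller; the text does not provide the deflator, so none is applied here.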

Search Party

Social media automated content distribution. A post goes up, an algorithm decides who sees it, the system scales to billions of interactions per day without a human in the loop. The implied prediction writes itself: fewer editorial jobs, less human gatekeeping, machine-driven media at machine speed.

What the automation actually created was content moderation. More than 100,000 people globally whose entire job is to look at the worst things humans post and make judgment calls the algorithm cannot resolve. Meta alone employs more than 15,000. The job category did not exist before 2005.

Nobody at Facebook’s founding could have pitched “we will need tens of thousands of people staring at the edges of human expression, making calls about context, satire, threats, and cultural nuance that no system can automate.” That job is pure human judgment, and it was created by automation, not destroyed by it.

Google automated search. A query goes in, ten blue links come out, no human librarian required. The implied prediction: research becomes self-serve, the information middleman disappears. What the automation actually created was an entire adversarial economy built around the algorithm’s seams. Search engine optimization became a $100 billion-plus global industry. That industry generated a counter-industry of spam detection. The spam detection generated an auditing industry.

Google itself employs more than 10,000 search quality raters, human beings whose job is to evaluate whether the algorithm is returning good results, a function that exists because automated search requires human judgment to validate its own outputs.

The middleman didn’t disappear. The middleman multiplied.

In 1964, the Ad Hoc Committee on the Triple Revolution delivered a memorandum to President Johnson declaring that “a permanent impoverished and jobless class is established in the midst of potential abundance.” U.S. nonfarm payrolls grew from 55.7 million that year to 78.7 million by 1980.

In 1985, the Office of Technology Assessment told Congress that “office automation will significantly reduce the number of jobs required for a given volume of information-handling work.” That was accurate at the level of the individual firm. It was wrong at the level of the economy, because the volume of information-handling work exploded. The prediction was precise and the conclusion was backwards. Every time.

These examples show the same mechanism. Automate a task, the market for that task expands, expansion generates new human roles at the seams. The doomers see the task and predict the void. What shows up is volume they didn’t model.

Judgment Day Error

The claim is that judgment is the last wall. Automate a spreadsheet and the accountant moves up the stack. Automate judgment itself and there is no stack left to climb. The error is treating judgment as a single capacity rather than a fractal one. Every time you systematize one layer of judgment, you expose a finer layer underneath that the system can't reach.

The trouble is we’ve been turning judgment into systems for a long time. We just didn’t call it “AI” when we did it.

Draft Day

Baseball scouting was pure judgment under uncertainty. A scout watched a kid play and predicted a career. Body type, swing mechanics, attitude, gut feeling. Billy Beane and the Oakland A’s replaced that judgment with on-base percentage and slugging percentage. Metrics the traditional scouting establishment actively dismissed. The A’s won 103 games in 2002 on one of the lowest payrolls in baseball.

The implied doom prediction: scouts become obsolete. In other words, models replace humans. The old judgment call dies.

What actually showed up was an explosion. Every MLB team now runs an R&D department. The analytics revolution created entire job categories that didn't exist in 2002: biomechanics analysts, pitching design coordinators, applied performance scientists, data tracking operators.

A private player development industry grew up around the data, with facilities running motion capture and force plates to train pitchers using the same metrics that were supposed to eliminate the human element.

Some scouts did get replaced. That displacement was real. Baseball America’s 2025 Scout Survey documents it in their own words: “Older, veteran scouts pushed aside for analytics. The state of the scouting industry is at its lowest point ever.”

But read what the scouts say next. “Getting to know the player and getting an accurate makeup read. Analytics can’t do this, there is no formula.” They’re describing the edge. The models got so accurate at the center of the distribution, at exit velocity and spin rate and launch angle, that the unmeasurable human qualities are now where the competitive advantage lives.

The models made it possible to evaluate more players than any scout could watch. More players evaluated meant more players developed, which meant more demand for the biomechanics labs, the training facilities, the data infrastructure to track their progress.

Minor league team valuations went from six figures to nine figures. Legal sports betting was built entirely on the statistical modeling infrastructure that front offices created. The sports analytics market, essentially zero in 2002, reached $4.8 billion and the sports training market is nearly $30 billion.

Manual Override

In September 2025, D.E. Shaw, one of the earliest and most sophisticated quantitative trading firms, managing over $70 billion, announced it was raising $3 to $5 billion for the Cogence Fund, where for the first time the firm would let human traders call all of the shots. No algorithms making the primary decisions. The fund was oversubscribed before launch.

The most quant-native shop on earth looked at the market and concluded that human judgment is the scarce input in a world of commoditized algorithms. When every fund has the same data, the same factors, the same models, the model stops being the edge. The returns converge. The alpha is in the things the model can’t see.

Long-Term Capital Management had two Nobel laureates and the most sophisticated models on Wall Street. The models were correct about the math and wrong about the humans. When Russia defaulted in 1998 and the market panicked, the fund lost $4.6 billion in four months because the models assumed rational actors in a market full of irrational ones. The judgment the models couldn't price was the one that mattered.

Baseball and D.E. Shaw arrived at the same place from opposite directions. The scouts started with judgment and watched the models take the center. The quants started with models and are now paying a premium for judgment at the edges. Automate judgment, and the remaining judgment becomes the scarce input.

The best version of the AI doom argument is that “machines do judgment” is categorically different from every previous automation.

The record says we’ve been turning judgment into systems for centuries and the result is always the same: the automated judgment becomes a commodity, and the human judgment that remains becomes more valuable, not less.

Stranger Than Fiction Error

This is the strongest version of the doom argument. Dario Amodei calls it “cognitive breadth.”

The claim is that unlike every previous technology, AI competes across the full range of human capability and automates the final frontier of human usefulness.

The industrial revolution automated physical tasks. The internet automated information retrieval. AI automates cognition itself. There is no obvious safe zone to retrain into, because the thing that makes humans valuable, the ability to synthesize, interpret, and judge, is precisely what the next generation of models is being trained to do.

The problem is that every previous wave made the same claim about its domain. Physical labor was supposed to be the totality of human economic contribution. Then information work was. Now cognition is.

The error is assuming we’ve finally cataloged all the ways humans create value.

Terms and Conditions

Physical labor looked like one thing before industrialization revealed it was thousands of distinct activities with wildly different automation timelines. Lifting automated differently than assembling, which automated differently than inspecting, which automated differently than designing. “Physical labor” was a summary, not an inventory. We discovered that by automating it.

Cognition is at exactly that stage now. “Cognition” does the same work that “physical labor” did. It’s a word that points at ten thousand domain-specific activities but sounds like one replaceable capability. An underwriter and a radiologist and a structural engineer all do “cognition.” The word is identical. What it points at is completely different. The models can manipulate the word. They can reason about underwriting, radiology, structural engineering at the level of language.

The person who spent fifteen years doing the actual thing knows what the word is a proxy for: the edge cases, the contextual judgment, the stuff that only becomes visible after you’ve been in that market. The more general the AI capability sounds, the more the premium shifts to whoever knows what the general words actually mean in a specific context.

The doom case says there is no next floor. Mechanization took physical labor, humans moved to information work. Digitization took information work, humans moved to judgment. AI takes judgment. The building ends. But saying “there’s no next floor” is the same error made at every previous ledge. The floors were never pre-existing. They got built by the automation itself.

All roads lead to Rome.

Consider a walk through Rome. Whether you’re thinking about the trip in the year 25 or the year 2025, the act is identical. The fundamental capability has not changed in two thousand years.

In 25 AD, the reasons to cross Rome were legible and finite. Commerce. Administration. Religious duty. Military orders. Visiting kin. And the transportation stack matched the simplicity of the reasons: a sandal and a road.

In 2025, Rome sees 35 million visitors a year against 2.8 million residents. The person crossing the city almost certainly flew in to do it. This pedestrian came from Denver. Every leg of the trip runs on a different industry that didn’t exist a generation ago. The flight is priced by yield management software. The hotel is listed on a platform that turned spare bedrooms into a hospitality network. The car is dispatched by an app whose driver classification is still being litigated. The photograph she posts triggers an algorithm that sends someone else on the same trip... which is how she ended up here in the first place.

A person crossed the city. The economy wrapped around that act is incomprehensible from one end to the other. Not because of technology. Because of reasons.

Leisure as an economic category did not exist before industrialization. It wasn’t hidden. It was latent. The entire framework of tourism, entertainment, hospitality, sports, media, fitness, gaming, all of it emerges from a world where productivity gains created time and income that had to go somewhere.

Attention Paid to Change

Nobody standing in a textile mill in 1820 could have pitched “in 150 years, the largest employers in your country will exist to help people enjoy their free time.” That dimension of economic life required the conditions that automation created in order to become real.

Before mass media, the idea that human attention was a scarce resource with economic value was incoherent. You couldn’t sell it because there was nothing competing for it at scale. Radio, television, and the internet created an entirely new dimension of economic activity: the monetization of what people look at.

A person with a million followers has an economically valuable property that has no secular analog outside the last hundred years. The entire concept of an “audience” as an asset class had no precedent. The category didn’t exist until it was created by the conditions that previous automation produced: surplus time, surplus income, mass literacy, mass connectivity.

Transportation shows a known activity generating unrecognizable new reasons and roles across centuries. The attention economy shows an entirely new dimension of value appearing from nothing.

Together they make the point that the future value will be created in a domain that hasn’t been conceived yet.

The doomers correctly catalog every existing dimension of human economic value and correctly show that AI can compete in all of them. The error is believing the list is complete.

“This discovery of yours will create forgetfulness in the learners’ souls, because they will not use their memories.” — King Thamus on the invention of writing, as told by Plato, c. 370 BC

The Pattern is the Argument

Zero-marginal-cost commodity labor has never shrunk an economy. It is the precondition for whatever comes next.

Every economic leap in history was built on a commodity layer. And every commodity layer in history came from one of two places: the ground or a person. There has never been a third option. Salt, spices, oil, rubber, cobalt, coltan, rare earths. Or slaves, serfs, coolies, child laborers, factory hands working fourteen-hour days. The ground or a person. That’s the entire list.

Pull it from the ground and whoever sits on the deposit extracts rents and builds nothing. Pull it from a person and the word for that is slavery, whether or not the law calls it that.

Cognitive labor is the first commodity layer that comes from neither place. Not the ground. Not a person. It runs on electricity and silicon. There is no deposit to sit on and no population to exploit. The resource trap fails because the resource isn’t scarce. The human trap fails because the commodity isn’t human.

That isn’t a footnote. It’s the point.

The doomers stare at zero-cost labor and see displacement. The historical record shows the opposite: release humans from what they’re currently doing, and they invent something the previous generation couldn’t have named.

Two mechanisms explain why:

Surplus creates space.

Humans fill it with things nobody could name.

The size of the economy was never determined by the cost of inputs. It was determined by the number of reasons humans found to use them. Rome had unlimited labor and a tiny transportation market. 2025 has expensive labor and a trillion-dollar one. The variable that changed wasn’t cost. It was human desire.

In 1800, 83% of the American labor force worked in agriculture. By doomer logic, automating the farm displaces five out of every six workers and leaves them with nowhere to go. By 1900 that share had dropped to 41%. By 2000 it was under 2%. Agriculture’s share of the labor force didn’t fall by 80 points because 80% of Americans became unemployed. It fell because the surplus that mechanization created became the foundation of industries that had no name in 1800.

Tourism as a material economic sector exists because the commodity layer freed the hours, and the hours became demand, and the demand became unfathomable industries that employed more people than agriculture ever did.

Electricity ran the same experiment fifty years later. In the 1880s it was a commodity input: arc lamps and factory motors. Edison and Westinghouse fought over who would sell it. Once the cost of a kilowatt-hour dropped low enough for residential use, humans didn’t just consume cheaper lighting. They invented refrigeration, and refrigeration created the modern food supply chain, the supermarket, the frozen food industry, and the cold-chain logistics network.

They invented air conditioning, and air conditioning made the Sun Belt habitable, which triggered the largest internal migration in American history and restructured the economy of half the states in the union. None of those were foreseeable consequences of cheaper electricity. All of them were consequences of humans filling the space that a commodity layer opened.

When digital distribution went to zero cost, the same dynamic played out in compressed time. Millions of people with surplus time and free tools built YouTube channels, TikTok accounts, Substacks, podcasts. An entire creator economy, now valued at over $250 billion, was built on the fact that the distribution layer became free. The question became: what do people do when distribution is free? The answer was an industry that didn’t exist fifteen years ago.

The question the doomers cannot answer is not “what happens to the work.” It’s “what do eight billion people build when cognitive labor is free?”

Nobody in 1800 could have answered “what does America build when agricultural labor is handled.”

Perhaps things like the research university, the suburb, and the highway system were legible. What about dating apps? Esports? MrBeast?

No one planned any of this stuff. Surplus creates space, and humans fill space with things no previous generation could name.

The same question about cognitive labor is unanswered today.

Automation generates its own complexity.

The original promise of Bitcoin was final settlement without intermediaries. Peer-to-peer. No banks, no brokers, no clearinghouses, no custodians. The whitepaper was explicit: a system for electronic transactions without relying on trust. The middleman was the target. The protocol was the weapon.

Every single middleman came back.

The protocol that was supposed to eliminate trust rebuilt the entire trust infrastructure of traditional finance in a fraction of the time it took to build the original. Not because the technology failed. Because the technology succeeded, usage expanded, and expansion created the same human-judgment bottlenecks that every other automation wave creates.

Bitcoin ran the entire experiment in fifteen years. That speed makes it the cleanest test case for the doom prediction. If removing intermediaries was ever going to stick, it should have stuck here. The technology was explicitly designed for it. The community was ideologically committed to it. The economic incentives were aligned for it. And every intermediary came back anyway, because the pattern is stronger than the technology. The middleman is not a bug. The middleman is an emergent property of complex systems under uncertainty. Automate one layer and the next layer of uncertainty surfaces, and someone shows up to stand in it.

Insurance is the same story told at geological speed. It started as a conversation in a London coffee house. You sat down with a man at Lloyd’s and talked about how likely it was that your ship was going to go down. He made a judgment call and wrote his name on the slip. Then actuarial tables arrived, then catastrophe modeling, then algorithmic underwriting. The judgment got automated at every level. Today the global insurance industry employs millions and writes $7 trillion in annual premiums, and its fastest-growing job categories are ones that didn’t exist when Lloyd’s was a coffee house: cyber risk analysts, climate modelers, parametric insurance designers, insurtech compliance specialists. The judgment migrated. The industry grew.

The humans moved to where the new uncertainty lives.

Bitcoin did in fifteen years what insurance took three centuries to demonstrate. Automation doesn’t simplify systems. It makes them complex enough to require new kinds of human judgment at the seams.

And AI is already demonstrating this with its own supply chain. The technology that is supposed to replace human judgment runs on human judgment as a raw input. Every foundation model that powers a “machines will replace workers” headline was trained on work product generated by workers.

The AI industry employs millions of data labelers, annotators, and reinforcement learning trainers worldwide. Scale AI built a $14 billion company on the premise that machine intelligence requires a massive human supply chain to function. Anthropic, OpenAI, Google, Meta: all of them employ or contract armies of humans to label data, evaluate outputs, and apply the kind of contextual judgment that the models cannot yet supply on their own.

The doomers model the supply side and get it wrong. They see automation removing humans from tasks and stop the analysis there. They miss that the automation itself generates new human bottlenecks at the next layer. And they never model the demand side at all. They never ask what the surplus creates, because there is no fundraise in the answer, no stock pop, no viral essay.

Both mechanisms run simultaneously. Both are invisible in advance. Both have a 2,000-year track record. Together they explain why the void fills every single time.

“Clerical employment in both industries is estimated to peak about 1990 and decline rapidly during the following decade.” — J. David Roessner, “Forecasting the Impact of Office Automation on Clerical Employment,” Technological Forecasting and Social Change, November 1985

The doomers have a list of jobs that will disappear. The optimists can only point at two thousand years of new ones showing up unannounced. The doom pitch is always legible. The counter-evidence is always an unfathomably strange category that hasn’t been invented yet. That’s why the doomers always sound smart and the optimists always sound vague.

Nobody can tell you what those jobs will be. That is not a weakness in the argument. That is the argument.

Content moderator. Prompt engineer. Data labeler.

Every time we zero-cost the commodity layer, we don’t get a smaller economy with fewer people in it. We get a larger economy with people doing things that would have sounded like science fiction to the previous generation. The void fills. It fills every single time, with work that is stranger, more human, and more valuable than what it replaced.

For the first time in history, the commodity layer doesn’t require anyone to suffer at the bottom. That’s not a dystopia. That’s the best setup we’ve ever had.

Technology has a long history of pushing humans to the margin.

That’s where the premium lives.

