Making Sense of the AI Headlines

A note for our LPs

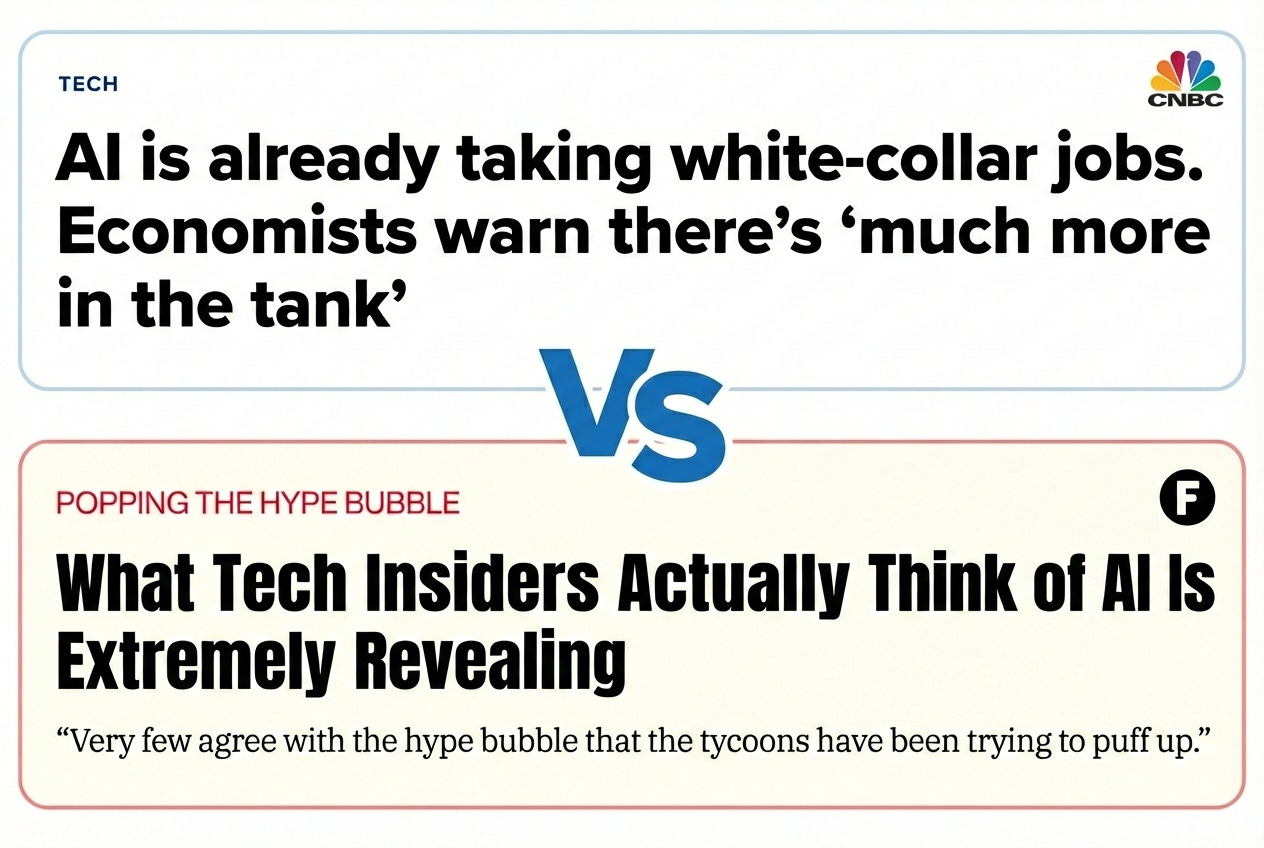

You’re seeing two stories at once. Salesforce says AI handles half its workload. Amazon says it’ll avoid hiring 160,000 people by 2027. Entry-level roles are disappearing. And then the same week: OpenAI’s co-founder says autonomous agents “just don’t work.”

Both are true. They’re describing different parts of the same picture.

What AI is actually good at

AI is very good at tasks that look like: take this input, apply these rules, produce this output. Summarize this document. Extract these fields from this form. Write a first draft of this email. Answer this question if the answer is in this PDF.

A lot of jobs were mostly this. Not all of them, but enough of them that companies are noticing. The entry-level analyst who spent 40 hours a week pulling data into slides. The customer service rep reading from a script. The associate who summarized contracts. These jobs existed because the work had to get done and humans were the only option.

Now there’s another option. The work still gets done. The headcount doesn’t.

Where AI hits a wall

AI breaks down when the task requires judgment that can’t be reduced to pattern-matching. When the answer isn’t in the document. When the next step depends on context that wasn’t explicitly provided. When you need to know what question to ask, not just answer the question you were given.

The technical framing is that AI is weak at “multi-step reasoning,” but the simpler version is: AI often can’t tell when it’s wrong. It produces output with the same confidence whether it’s right or not. This is fine when a human reviews everything. It falls apart when you try to chain tasks together and let it run, because an unnoticed error in one step becomes the input to the next.

This is why “agentic AI” (bots that autonomously handle workflows) keeps failing in production. The demos look great. The deployed systems require constant human cleanup.
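To put rough numbers on the chaining problem, here is a back-of-the-envelope sketch. The 95% per-step accuracy is an illustrative assumption, not a measured figure, and the function name is hypothetical. It also assumes that errors at each step are independent and that nothing catches them along the way.

```python
def chain_success_rate(per_step_accuracy: float, steps: int) -> float:
    """Chance that every step in a chain comes out right.

    Assumes each step succeeds independently with the same probability
    and that no human or check catches a mistake in between.
    """
    if not 0.0 <= per_step_accuracy <= 1.0:
        raise ValueError("per_step_accuracy must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return per_step_accuracy ** steps


# Illustrative only: 95% per step is an assumed figure, not a benchmark.
for steps in (1, 5, 10, 20):
    rate = chain_success_rate(0.95, steps)
    print(f"{steps:>2} steps at 95% each: {rate:.0%} chance the whole run is clean")
```

Under those assumptions, a step that is right 95% of the time yields a clean ten-step run only about 60% of the time, and a clean twenty-step run about 36% of the time. A single reviewed task can look reliable while an unattended chain of the same tasks does not.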

The gap where all the confusion lives

So: AI can do a lot of the tasks that used to require a human. AI cannot do the job of figuring out which tasks to do, or catching its own mistakes, or handling the case that doesn’t fit the pattern.

Companies are cutting the task-doers. They still need the people who scope the work, check the output, and deal with exceptions. In some cases they need more of those people, because AI produces more output that needs review.

The headlines make it sound like AI is replacing workers. What’s actually happening is that AI is replacing a certain kind of work. The jobs that were mostly that kind of work are disappearing. The jobs that were partly that kind of work are changing. The jobs that were none of that kind of work are fine.

Why the margins won’t last

Right now, companies are pocketing the difference. Fire the humans, keep the AI, enjoy the margin expansion.

This never lasts. Humans have a habit of finding their way into any exchange of money. The work doesn’t disappear; it moves. Implementation consultants. Prompt engineers. AI ops teams. Quality assurance. Exception handling. Customer success for the customers who can’t figure out the bot.

The first company to cut headcount gets a margin advantage. The tenth company to cut headcount is just at parity. And somewhere in between, a new class of work emerges to fill the gap between what AI can do and what customers actually need.

Routine tasks get automated; judgment does not. Most jobs are a mix. The ratio determines what happens to them.